- #DIFFERENT VOICES FOR MICROSOFT SPEECH UPDATE#

- #DIFFERENT VOICES FOR MICROSOFT SPEECH SOFTWARE#

- #DIFFERENT VOICES FOR MICROSOFT SPEECH PROFESSIONAL#

Listen to any written materials in authentic voices while doing something else. Use this service to practice your listening and speaking skills, or to master your pronunciation in foreign languages. Replay the text as many times as you wish, and choose the speech rate that works for you. Just type a word or a phrase, or copy and paste any text. The TTS service speaks Chinese Mandarin (female), Chinese Cantonese (female), Chinese Taiwanese (female), Dutch (female), English British (female), English British (male), English American (female), English American (male), French (female), German (female), German (male), Hindi (female), Indonesian (female), Italian (female), Italian (male), Japanese (female), Korean (female), Polish (female), Portuguese Brazilian (female), Russian (female), Spanish European (female), Spanish European (male), and Spanish American (female). This natural-sounding text-to-speech service reads aloud anything you like in a variety of languages and dialects, in male and female voices.
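Choosing a voice and a speech rate, as this service lets you do, is commonly expressed in W3C SSML (Speech Synthesis Markup Language), which Azure's speech service, among others, accepts. The sketch below builds a minimal SSML document. The helper name `build_ssml` and the example voice name `en-US-JennyNeural` are illustrative assumptions, not part of the service described above; available voice names depend on the provider.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(text: str, voice: str, lang: str = "en-US", rate: str = "default") -> str:
    """Wrap plain text in a minimal SSML document with a chosen voice and rate.

    `voice` is a provider-specific voice name (e.g. "en-US-JennyNeural" on
    Azure; treat that value as an example). `rate` should be an SSML prosody
    keyword such as "slow", "medium", "fast", or "default"; percentage forms
    are interpreted differently by different services.
    """
    return (
        '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
        f"xml:lang={quoteattr(lang)}>"
        f"<voice name={quoteattr(voice)}>"
        # escape() turns &, <, > in the user's text into XML entities
        f"<prosody rate={quoteattr(rate)}>{escape(text)}</prosody>"
        "</voice></speak>"
    )


ssml = build_ssml("Replay me as often as you like.", voice="en-US-JennyNeural", rate="slow")
```

With the Azure Speech SDK for Python, a string like `ssml` can be passed to `SpeechSynthesizer.speak_ssml_async`; other SSML-capable engines accept it in a similar way.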

#DIFFERENT VOICES FOR MICROSOFT SPEECH UPDATE#

Update March 24th, 2:33PM ET: This post has been updated with statements from Google and IBM.

Text-to-speech service in a variety of languages, dialects, and voices.

#DIFFERENT VOICES FOR MICROSOFT SPEECH PROFESSIONAL#

In the Stanford study, Microsoft’s system achieved the best result, while Apple’s performed the worst. It’s important to note that these aren’t necessarily the tools used to build Cortana and Siri, though they may be governed by similar company practices and philosophies.

“Fairness is one of our core AI principles, and we’re committed to making progress in this area,” said a Google spokesperson in a statement to The Verge. “We’ve been working on the challenge of accurately recognizing variations of speech for several years, and will continue to do so.” “IBM continues to develop, improve, and advance our natural language and speech processing capabilities to bring increasing levels of functionality to business users via IBM Watson,” said an IBM spokesperson. The other companies mentioned in the paper did not immediately respond to requests for comment.

The Stanford paper posits that the racial gap is likely the product of bias in the datasets used to train the systems. Recognition algorithms learn by analyzing large amounts of data, so a bot trained mostly on audio clips from white people may have difficulty transcribing a more diverse set of user voices. The researchers urge makers of speech recognition systems to collect better data on African American Vernacular English (AAVE) and other varieties of English, including regional accents. They suggest these errors will make it harder for black Americans to benefit from voice assistants like Siri and Alexa. The disparity could also harm these groups when speech recognition is used in professional settings, such as job interviews and courtroom transcriptions.
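Comparisons such as “best” and “worst” system rest on how often each transcript gets words wrong. Studies like this one typically report that as word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn a system’s transcript into the human reference transcript, divided by the number of words in the reference. The sketch below computes it with a standard edit-distance table. The whitespace tokenization and lowercasing are simplifying assumptions, and `word_error_rate` and `average_wer` are illustrative names, not the study’s code.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words.

    Tokenization is a simplifying assumption: lowercase, split on whitespace.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the first i reference words
    # and the first j hypothesis words (row i - 1 while computing row i)
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from transcript
                curr[j - 1] + 1,     # insertion: extra word in transcript
                prev[j - 1] + cost,  # match (cost 0) or substitution (cost 1)
            )
        prev = curr
    return prev[-1] / len(ref)


def average_wer(pairs) -> float:
    """Mean WER over (reference, hypothesis) pairs, e.g. all snippets from one speaker group."""
    scores = [word_error_rate(ref, hyp) for ref, hyp in pairs]
    return sum(scores) / len(scores)
```

Averaging over all snippets from one group of speakers yields a single figure per group, which is the kind of number behind the 19 and 35 percent error rates reported for the Stanford study.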

#DIFFERENT VOICES FOR MICROSOFT SPEECH SOFTWARE#

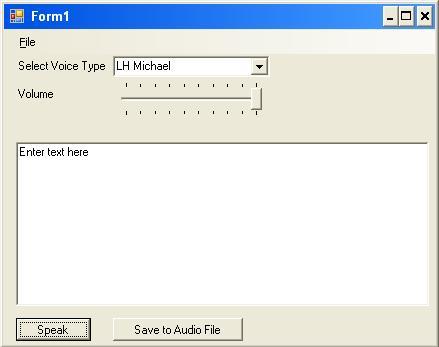

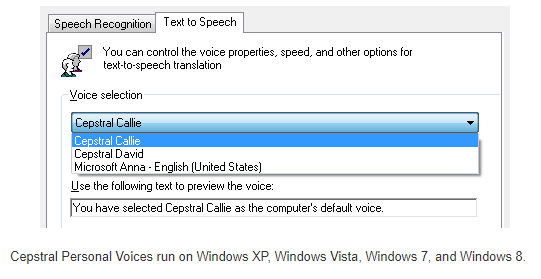

Speech recognition systems have more trouble understanding black users’ voices than those of white users, according to a new Stanford study. The researchers used voice recognition tools from Apple, Amazon, Google, IBM, and Microsoft to transcribe interviews with 42 white people and 73 black people, all of which took place in the US. The tools misidentified words about 19 percent of the time during the interviews with white people and 35 percent of the time during the interviews with black people. The system found 2 percent of audio snippets from white people to be unreadable, compared to 20 percent of those from black people. The errors were particularly large for black men, with an error rate of 41 percent compared to 30 percent for black women.

Previous research has shown that facial recognition technology has similar biases. An MIT study found that an Amazon facial recognition service made no mistakes when identifying the gender of men with light skin, but performed worse when identifying an individual’s gender if they were female or had darker skin. Another paper identified similar racial and gender biases in facial recognition software from Microsoft, IBM, and Chinese firm Megvii.

Neural Text to Speech (Neural TTS), a speech synthesis capability of Cognitive Services on Azure, converts text to lifelike speech that is close to human parity. To produce male and female voices from your own program, you need to select a fitting text-to-speech library (one with both a male and a female voice) and make sure there is a Python library that lets you use it; a library like that is called a 'wrapper library'. A quick search shows that such wrapper libraries do exist, for example 'Pyttsx'.
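The wrapper-library approach can be sketched with pyttsx3, the Python 3 fork of pyttsx. Which voices exist depends on the operating system’s speech engine, and many drivers leave a voice’s `gender` field empty, so the hypothetical helpers `guess_gender` and `pick_voice` below fall back to a guess based on the voice name. That heuristic and its keyword lists are assumptions for illustration, not part of pyttsx3; only `pyttsx3.init`, `getProperty`, `setProperty`, `say`, and `runAndWait` are library calls.

```python
import re
from typing import Iterable, Optional

# Hypothetical name hints: "David"/"Zira" are typical Windows voices and
# "Alex"/"Samantha" typical macOS voices; adjust to the voices you have installed.
FEMALE_NAME_HINTS = {"female", "zira", "samantha"}
MALE_NAME_HINTS = {"male", "david", "alex"}


def guess_gender(voice) -> Optional[str]:
    """Return "female", "male", or None for a pyttsx3-style voice object."""
    declared = (getattr(voice, "gender", None) or "").lower()
    # Check "female" first, because "male" is a substring of "female".
    if "female" in declared:
        return "female"
    if "male" in declared:
        return "male"
    # Fall back to whole words in the voice name (so "German" does not match "man").
    words = set(re.findall(r"[a-z]+", (getattr(voice, "name", "") or "").lower()))
    if words & FEMALE_NAME_HINTS:
        return "female"
    if words & MALE_NAME_HINTS:
        return "male"
    return None


def pick_voice(voices: Iterable, gender: str):
    """Return the first voice whose guessed gender matches, or None."""
    for voice in voices:
        if guess_gender(voice) == gender:
            return voice
    return None


def speak(text: str, gender: str = "female", rate: int = 150) -> None:
    """Read `text` aloud with pyttsx3 (pip install pyttsx3), preferring the requested gender."""
    import pyttsx3  # imported here so the helpers above work without pyttsx3 installed

    engine = pyttsx3.init()
    voice = pick_voice(engine.getProperty("voices"), gender)
    if voice is not None:
        engine.setProperty("voice", voice.id)
    engine.setProperty("rate", rate)  # words per minute; the default is typically 200
    engine.say(text)
    engine.runAndWait()
```

Calling `speak("Hello there", gender="male")` uses the first voice guessed to be male and otherwise keeps the engine’s default voice. Keeping the selection logic separate from the engine makes it easy to swap in a different wrapper library later.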